I have a confession to make. I think I invented the term ‘Central Bank Digital Currency’.

It was in a blog post I published back in 2015.

I should stress that I didn’t invent the concept. That was probably J P Koning. Or maybe Robert Sams. But the name? I believe that was me.

I was reminded of this claim to fame at last week’s excellent ‘Digital Money’ event in London, organised by the Digital Pound Foundation and hosted by Clifford Chance.

I had been invited to give the closing address and was in the audience for the previous session. The speakers were Andrew Buckley and William Lorenz, and they delivered a masterclass in the art of product introduction, using history’s rich list of failed products as guides to the future.

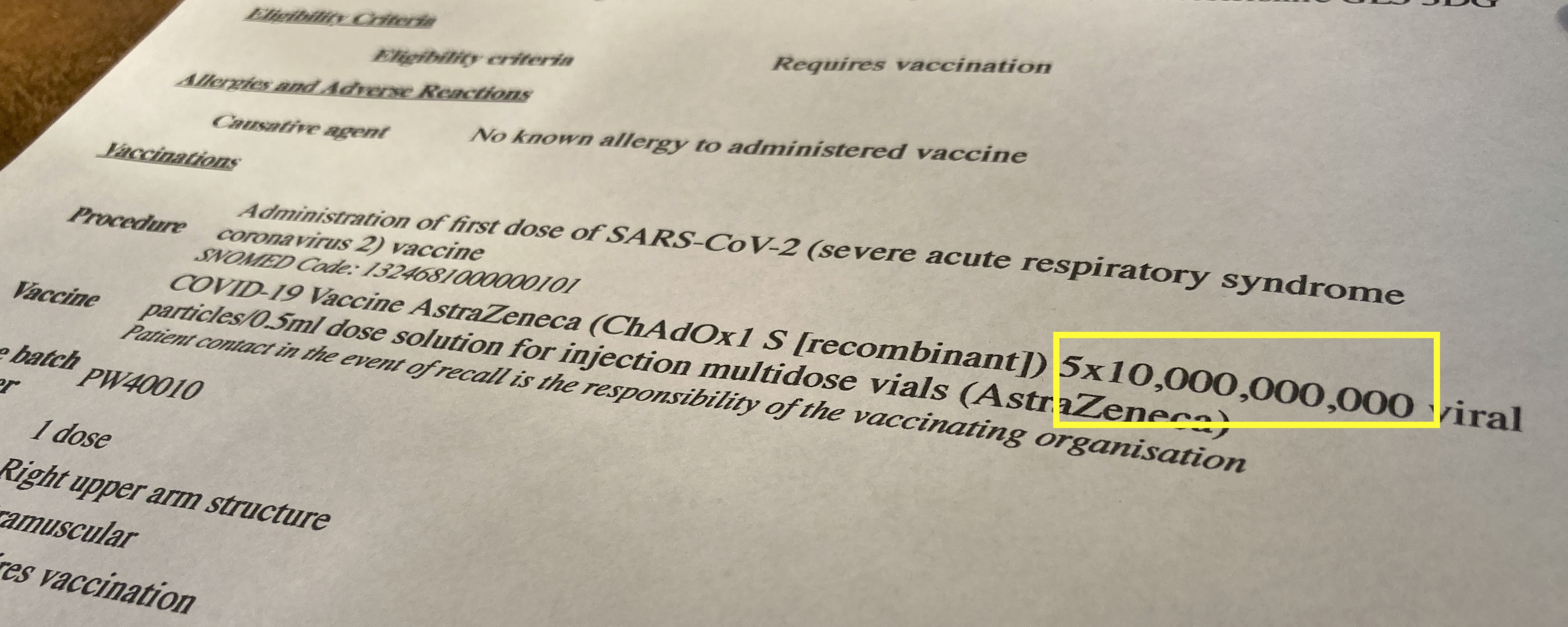

Andrew talked about the power of ‘showing, not telling’ when soliciting feedback from consumers on potential new products, and how provoking a visceral response can be very powerful in that regard.

He gave the example of a hypothetical CBDC that enforces a personal carbon budget. Indeed, it’s hard to imagine something more viscerally controversial and hence likely to provoke a response. And, as he explains in this paper (p9), the way he made it real for his audience was to put it in terms of a monthly ‘meat budget’.

I wasn’t 100% sure everybody heard the bit about this being a deliberately controversial and theoretical example. So I felt compelled to clarify to the audience at the start of my talk that ‘digital meat rations’ are not what I was thinking of when I invented the term ‘Central Bank Digital Currency’ back in 2015…

But it triggered an interesting question. When I coined that phrase, what was I thinking of? I figured I should go back and re-read what I’d posted.

What problem did I think a CBDC would solve back in 2015?

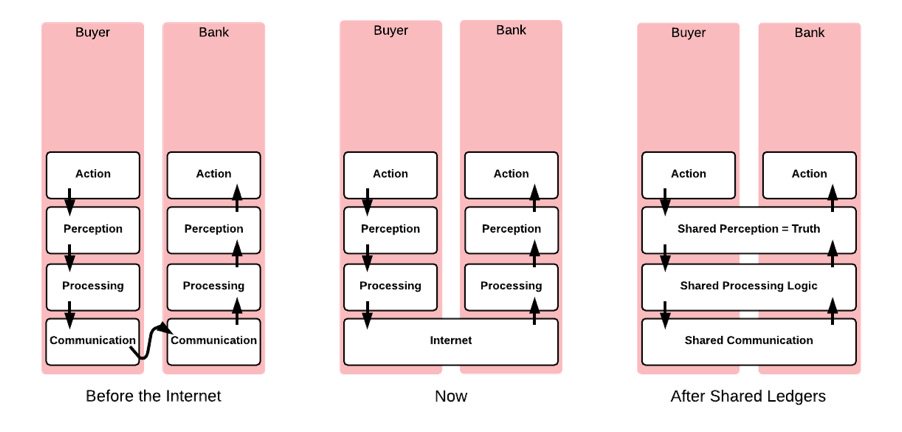

It turns out I was thinking about the world of permissionless blockchains, smart contracts, and what might happen if regulated financial institutions started to use them.

My thought experiment centred on a smart contract that implemented an insurance market. Imagine a bunch of investors who provide risk capital. And a bunch of customers seeking to insure a risk, with the smart contract managing the process and holding the premiums.

For example, you could imagine the contract paying out to a farmer if they provided a digitally signed proof of catastrophic weather in their region. There were a lot of fun ideas like this kicking around back in 2015…

I added a prediction: I figured in the future that users would want to transact in real-world currencies – dollars, euros, pounds – rather than crypto tokens. So there would need to be some on-ledger representation. Perhaps there would be ‘Barclays GBP’ tokens and ‘HSBC GBP’ tokens and so forth.

I should add that the world’s first stablecoin – Tether, or ‘Realcoin’ as it was back then – did exist by this time, but it hadn’t yet gained much adoption and I wasn’t aware of it, so I was thinking in terms of regular banks as the issuers of GBP- or USD-denominated liabilities. (How naïve I was…)

Anyway, the blog post asked: how on earth would this all work if the smart contract ended up holding a bunch of different GBP tokens? If I paid in HSBC-GBP but received my payout as a basket of Lloyds-GBP and Barclays-GBP would I be happy with that? How would I redeem these tokens if I didn’t have an account with those banks? What would happen if one of the coins stopped trading at par? Would the insurance market smart contract somehow have to deal with this complexity? It would be a nightmare!

So I argued that a universally accepted, high-quality, low-risk digital currency could be extremely useful… perhaps one issued by a currency area’s central bank. Hence a Central Bank Digital Currency.

As we now know, history took a different path. Commercial banks did not pursue this specific opportunity, but the demand for real-world currency tokens turned out to be real, and stablecoin issuers have captured that market. The product-market fit is insanely good.

I also find it interesting that the proliferation of competing tokens I foresaw also came to pass. We don’t just have one USD stablecoin; we have a whole bunch of them. And they do sometimes trade slightly away from par. But I was wrong to predict that anybody would actually care..!

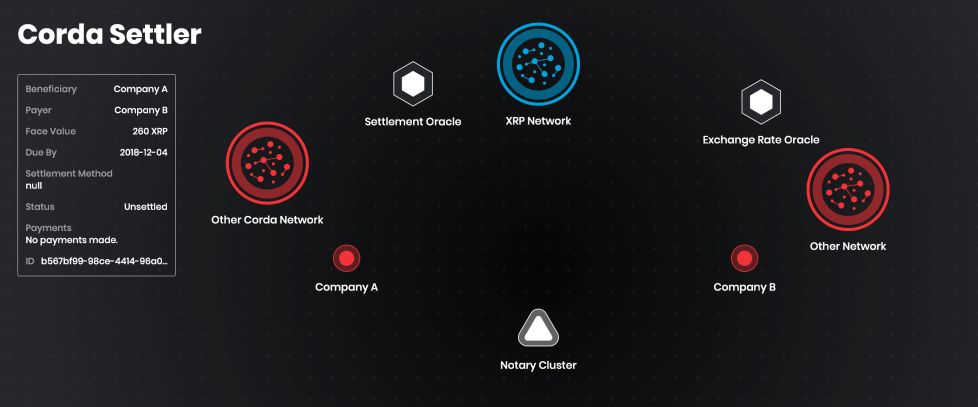

But perhaps the main reason I was so wrong about the role of commercial banks and the opportunity for a central bank digital currency was because the regulated institutions mostly steered clear of the permissionless blockchains. Instead, they started their work on permissioned platforms, such as the Corda platform I helped build at R3, or one of Digital Asset’s platforms, or one of the Hyperledger stable.

It is quite possible that these worlds – public and private – are on a convergence path. Imagine a permissioned ‘L2’ or ‘subnet’ consisting of an ecosystem of high-quality, full-production, regulated networks interacting safely with the permissionless world. The permissioned world could access new distribution channels, and permissioned networks’ demand for high-quality cash settlement assets might drive additional demand for high quality stablecoins. But, right now, in 2024, the majority of blockchain deployments by regulated financial institutions are in the permissioned space.

And there’s a curious and under-studied detail about these deployments: almost every single one is its own standalone network. Yes – each network is decentralised, or decentralizable. Yes – each deployment consists of a network of nodes operated by or for a range of market participants. Yes – these networks drive up consistency, enable near real-time exchange of value, and have modernised multiple markets in deep ways.

But they look very different: in the permissionless world, there is a small number of large, shared, multi-purpose networks such as Ethereum, Avalanche, Solana and so forth, where a large number of independent projects and contracts are deployed and co-exist. Whereas in the permissioned world, we see the opposite: a large number of small, single-purpose networks.

This isn’t how I thought things would play out: we designed Corda to enable multiple applications to coexist on the same network, just like what happens in the permissionless realm. But it’s an understandable phenomenon: the obligations and constraints faced by regulated institutions means they require far more control over their networks than is available when sharing power, control and governance amongst a much larger group. So it’s a perfectly logical place to start if you’re a regulated institution: build your network as fast and as well as you can, gain customers, deliver value, and then start to connect in to other networks, ‘peering’ with them in effect, building out a network of networks over time, in much the same way the internet evolved.

It’s possible this is a point-in-time phenomenon, and these networks will themselves eventually converge – or consolidate onto a smaller number of shared networks. But it does cause a problem in the meantime: settlement. If every such network had a cash asset deployed to it the fragmentation of liquidity would be ridiculous. So what we see today in the permissioned space is all about interoperability. For example, a digital asset network such as HQLAx (running Corda) can connect to the high-quality cash network Fnality (running Hyperledger Besu). Indeed, Fnality holds all its assets at a central bank and so can perhaps be best described as a private-sector-led synthetic CBDC.

And I think it’s this approach we’re going to see more of: a concentration of cash liquidity. That is: one or more single-purpose high-quality cash-settlement networks in a currency area will achieve critical mass, and atomic settlement of regulated digital asset trades will happen via cross-chain interoperability protocols.

And this brings the question I asked in 2015 back to the fore: if lots of different networks, with lots of different users, are going to transact amongst themselves, what form of digital currency will they ultimately use? My answer back then was something that is high-quality and that they can all trust. Hence a digital currency that is backed by a central bank. And, today, that could be either directly or via a private-sector-led synthetic equivalent.

If so, I’ll settle for that. Anything to avoid going down in history as the enabler of ‘digital meat rations’…